Designing for a Touchless UX

One thing we’ve learned over the past year is that the public is becoming much more receptive to interacting with digital services hands-free through a touchless UX. Though it has been possible to do certain tasks without poking a touchscreen for quite some time, many methods for doing so are only now becoming mainstream.

Understanding how touchless interfaces are used today, and what’s to come, will be important for certain development endeavors. Thanks to artificial intelligence (AI) and machine learning (ML), the door is wide open for new input methods that will eventually become staples of the next generation of apps, in ways we’ve only just begun to realistically imagine. Here, we’re going to define touchless UX, then look at various technologies used to build touchless digital products, with a heavy focus on gestures.

What is a touchless UX?

As the name implies, touchless is a kind of umbrella term that’s used to describe any interaction with software that doesn’t require physical touch. Interestingly, it shares challenges similar to those that we observed when cell phones transitioned to completely touchscreen interfaces.

Currently, the most widely accepted and used form of touchless interface is voice control, which we have discussed fairly extensively. It’s a core part of virtually every smartphone through assistants such as Google Assistant on Android and Siri on iOS, and developers can build on the same capability with tools like the Android Speech API and the iOS Speech Framework. Because speech-to-text (STT) has been easy to initiate on any device for several years now, people have slowly adopted this method of input somewhat organically, just because “it’s there.”

Speech tools are great for activities like driving because they eliminate the need to physically engage with a device or look away from the road. When it comes to speech-based AI tools, the value is easy to see. However, other emerging touchless UX designs aren’t as familiar, so we’ll likely see some obstacles to adoption while potential users become more comfortable with the idea.

Technologies for touchless UX systems

To get a feel for what a touchless system can bring to the table, let’s look at some of the ways companies are implementing different solutions to offer hands-free systems.

NFC for cashless payments

For years, near-field communication (NFC) technology has grown in popularity because it’s convenient and secure. Mostly, we’ve used this technology with systems like Google Pay and Apple Pay: your payment details are securely exchanged over a short-range radio link through the NFC chip built into your device. It’s a bit like Wi-Fi but with a substantially shorter range, typically just a few centimeters.

Another way we can use NFC is by implanting itty-bitty chips into our bodies, which makes some people uncomfortable for obvious reasons. Essentially, these implants store and transmit small amounts of data, like authentication or payment information. During scanning, a unique code is usually required to prevent unauthorized automatic exchanges. Though this technology has been available to the public since around 2017, it hasn’t been widely adopted. That said, there is one guy with four chips in his hand who claims they make some processes more convenient, plus an implanted magnet that makes for good entertainment.

And no, no one is adding these chips to vaccines to learn about your life. NFC and RFID chips can only store and transmit small amounts of data, and though they’re small, most solutions today couldn’t fit through the 23- to 25-gauge needles most professionals use to inject a vaccine. They’re also implanted subcutaneously, not intravenously, so they stay in one spot (well, ideally). Further, a device capable of tracking your location would need an embedded system, a GPS receiver, and its own power source, which would mean a much larger form factor. Unless some kind of experimental nanotechnology were used, it just can’t happen.

Currently, consumers are most comfortable with NFC and RFID systems used in our smart devices and in complementary wearables, like the bracelets used in the product we developed for Magic Money. So if you’re looking for something in this area, forgo the injection stuff for now.

Computer vision for gesture control

Right now, we mostly use the word “gesture” in the context of how we interface with a touchscreen, for example, double-tapping or swiping with more than one finger. Eventually, and ideally sooner rather than later, gestures will become a common interface in their own right: our movements will be captured by camera lenses (the plural is significant here, so stay tuned) and interpreted by a computer vision system that relies on ML to identify objects and motion, ultimately allowing users to navigate and manipulate a program.
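To make the last step of that pipeline more concrete, here is a minimal Python sketch that turns tracked hand motion into a navigation command. It assumes a hand-tracking model has already produced a list of normalized (x, y) hand positions, one per frame; the `classify_swipe` function, its `min_distance` threshold, and the command names are hypothetical and not taken from any particular product or library.

```python
from typing import List, Optional, Tuple

# Hypothetical input: hand-centre positions in normalized image coordinates
# (0.0 to 1.0), one per video frame, as a hand-tracking model might report them.
Point = Tuple[float, float]


def classify_swipe(track: List[Point], min_distance: float = 0.3) -> Optional[str]:
    """Map a hand trajectory to a swipe command, or None if it isn't one."""
    if len(track) < 2:
        return None  # not enough frames to measure any motion

    # Net displacement between the first and last tracked positions.
    dx = track[-1][0] - track[0][0]
    dy = track[-1][1] - track[0][1]

    # Use whichever axis moved the most, and ignore small, accidental movements.
    if abs(dx) >= abs(dy):
        if abs(dx) < min_distance:
            return None
        return "swipe_right" if dx > 0 else "swipe_left"
    if abs(dy) < min_distance:
        return None
    # Image y coordinates grow downward, so a positive dy means the hand moved down.
    return "swipe_down" if dy > 0 else "swipe_up"


# Example: a hand moving left to right across the frame.
print(classify_swipe([(0.2, 0.5), (0.4, 0.52), (0.7, 0.5)]))  # swipe_right
```

A production system would likely also weigh speed, timing, and hand shape, and might use a trained classifier instead of a fixed threshold, but the basic idea of mapping measured motion to an app command is the same.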

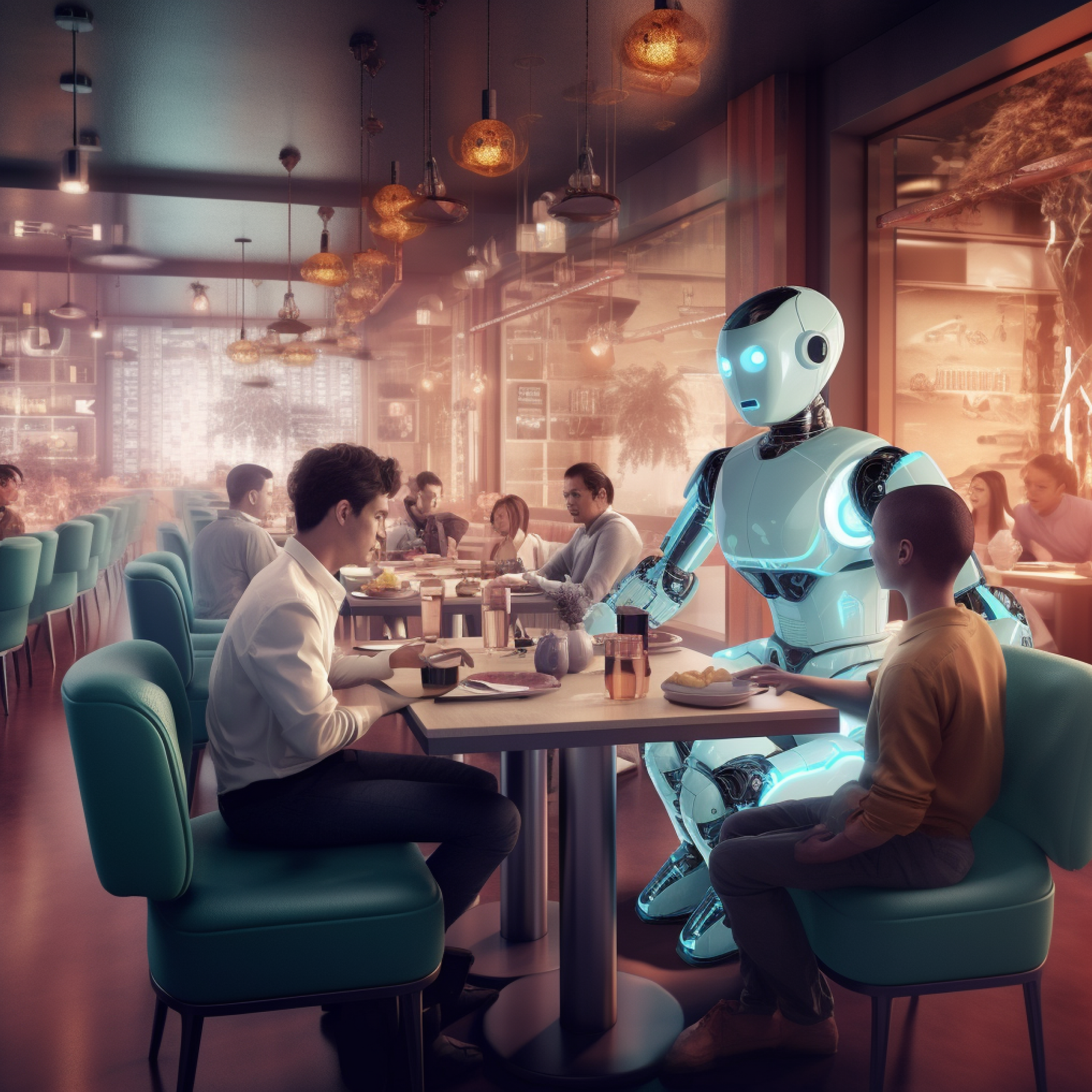

The idea here is that these systems would work a bit like the PlayStation Camera or Xbox Kinect, but with far more utility, adding functionality for purposes beyond entertainment. Each of those cameras had a small library of games that worked in conjunction with it, usually providing some kind of augmented reality (AR) functionality. By using two cameras to capture your surroundings and build a near-3D rendering of them, these systems can detect your movements, which allows you to interact with elements superimposed on the image on your screen. The featured image for this post, from touchless kiosk company UltraLeap, illustrates this kind of functionality, which also lets you operate a system entirely through gestures, much like a touchscreen but without any actual contact.
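The depth that two-camera setups such as the PlayStation Camera recover comes from disparity: the same point lands at slightly different horizontal positions in the left and right images, and the closer the point, the bigger the shift. Under the standard pinhole model for a rectified stereo pair, depth is Z = f · B / d, where f is the focal length in pixels, B is the distance between the two cameras, and d is the disparity in pixels. The sketch below assumes that idealized model; the function name and the sample numbers are illustrative, not the specifications of any console camera.

```python
def depth_from_disparity(x_left: float, x_right: float,
                         focal_px: float, baseline_m: float) -> float:
    """Estimate the depth (in meters) of a point seen by a rectified stereo pair.

    x_left / x_right: horizontal pixel position of the same point in each image.
    focal_px: focal length in pixels (assumed identical for both cameras).
    baseline_m: distance between the two camera centres, in meters.
    """
    disparity = x_left - x_right  # horizontal shift between the two views, in pixels
    if disparity <= 0:
        # Zero disparity would mean a point infinitely far away; negative means a bad match.
        raise ValueError("expected a positive disparity")
    return focal_px * baseline_m / disparity


# Illustrative numbers: a 700 px focal length and cameras 6 cm apart.
print(f"{depth_from_disparity(320.0, 278.0, 700.0, 0.06):.2f} m")  # 1.00 m
print(f"{depth_from_disparity(320.0, 299.0, 700.0, 0.06):.2f} m")  # 2.00 m
```

Halving the disparity doubles the estimated depth, which is why stereo depth estimates get less precise for objects far from the cameras.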

Unfortunately, neither caught on: Microsoft abandoned the Kinect in early 2018, and PlayStation now uses its camera to support its VR system. The games never reached their full potential with any audience; they mostly appealed to casual gamers but were priced closer to mainstream titles.

The next wave of systems we’re seeing gain traction in the market includes those used for identification and “re-identification” (a comprehensive analysis of an object’s physical profile and how it moves through an environment equipped with such a system) in some security camera systems, as well as those used to analyze movement and form in digital fitness solutions. On the same premise as facial recognition, single-camera systems are being used to capture unique identifiers on an individual, such as the vein patterns in the palm, as seen in Amazon One. More sophisticated re-identification systems sometimes employ more than one camera to mimic stereoscopic vision, à la the game console cameras we mentioned earlier, to profile people as well as other complex, moving things, analyzing everything from posture and movement speed to other physical idiosyncrasies. Some even add thermal imaging for extra data.
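Re-identification systems commonly work by having an ML model turn each detected person into a numeric feature vector (an “embedding”) and then comparing vectors. The sketch below assumes those embeddings already exist and matches a new observation against a gallery of known ones using cosine similarity; the vectors, IDs, and the 0.8 threshold are made up for illustration and don’t reflect Amazon One or any specific product.

```python
import math
from typing import Dict, List, Optional


def cosine_similarity(a: List[float], b: List[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # treat an all-zero vector as matching nothing
    return dot / (norm_a * norm_b)


def reidentify(query: List[float], gallery: Dict[str, List[float]],
               threshold: float = 0.8) -> Optional[str]:
    """Return the gallery ID most similar to `query`, or None if none clears `threshold`."""
    best_id: Optional[str] = None
    best_score = threshold
    for person_id, embedding in gallery.items():
        score = cosine_similarity(query, embedding)
        if score >= best_score:
            best_id, best_score = person_id, score
    return best_id


# Made-up 3-dimensional embeddings; real models often produce hundreds of values or more.
gallery = {
    "person_a": [0.9, 0.1, 0.0],
    "person_b": [0.0, 0.2, 0.95],
}
print(reidentify([0.85, 0.15, 0.05], gallery))  # person_a
print(reidentify([0.0, 1.0, 0.0], gallery))     # None
```

The threshold is the key design knob: raising it reduces false matches at the cost of failing to recognize the same person under different lighting or poses.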

In a more tangible and less sketchy example, fitness apps are using cameras to watch users’ movements and give them feedback. The visual data collected by the camera is measured and compared to the “perfect” form (usually another recording of someone performing the ideal movements based on well-defined kinesiological benchmarks) to give users real-time feedback. A good example of a functional solution on the market today is the Onyx app for iOS.
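One simple way to compare a user’s form to a reference is to compute joint angles from body keypoints (for example, hip, knee, and ankle positions from a pose-estimation model) and check them against the reference recording’s values. The sketch below assumes 2D keypoints are already available and uses a hypothetical squat check with a 10-degree tolerance; it illustrates the general idea and is not how Onyx or any other app actually scores movement.

```python
import math
from typing import Tuple

Point = Tuple[float, float]  # (x, y) keypoint position in image coordinates


def joint_angle(a: Point, b: Point, c: Point) -> float:
    """Angle in degrees at joint `b`, between the segments b->a and b->c."""
    angle_ba = math.atan2(a[1] - b[1], a[0] - b[0])
    angle_bc = math.atan2(c[1] - b[1], c[0] - b[0])
    degrees = abs(math.degrees(angle_ba - angle_bc))
    # Fold into the 0-180 range so the result is the interior angle of the joint.
    return 360.0 - degrees if degrees > 180.0 else degrees


def form_feedback(user_angle: float, reference_angle: float,
                  tolerance: float = 10.0) -> str:
    """Compare a user's joint angle with the reference recording's angle.

    Assumes a squat-style movement, where a smaller knee angle means a deeper bend.
    """
    difference = user_angle - reference_angle
    if abs(difference) <= tolerance:
        return "good form"
    return "bend more" if difference > 0 else "bend less"


# Hypothetical keypoints for hip, knee, and ankle from a single video frame.
hip, knee, ankle = (0.0, 0.0), (0.0, 1.0), (1.0, 1.0)
user_knee = joint_angle(hip, knee, ankle)  # a right angle at the knee
print(round(user_knee), form_feedback(user_knee, reference_angle=90.0))  # 90 good form
```

Running this check on every frame, against the matching frame of the reference recording, is one way such an app could produce the real-time feedback described above.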

As these technologies mature, we’ll likely see much more advancement in these areas, and eventually things like truly interactive holograms will become possible and cost-effective.

So, when you look at depictions from popular fiction like Tony Stark interacting with Jarvis (i.e., a human interfacing with a holographic display using specific gestures to complete certain actions, manipulate data, 3D model a badass new piece of armor, etc.), know that something close to this is possible right now. It’s just that no one has built it yet, let alone in a commercially viable way.

Blue Label Labs are experts at building touchless UX systems

We seek out challenges in design and outcomes by embracing innovation and confronting the unconventional. You can check out the Sol LeWitt app, one of our more recent apps that uses computer vision: it lets you scan installations created by the renowned artist anywhere in the world with your device’s camera to identify the piece and learn more about it. You can also check out PromptSmart, for which we developed patented voice-tracking algorithms that let individuals control a teleprompter with their voice. To learn more or discuss your app idea, get in touch with us!